Visualization

Professor Emeritus Dick Davison presents this exhibition of more than 30 paintings with three interwoven themes that have shaped his work over the past two decades: monuments and memorials that contend with time and human longing; visionary landscapes inspired by artists such as Charles Burchfield and Samuel Palmer, reflecting on nature as evidence of the divine; and Biblical narratives that point to enduring spiritual truths.

The Arlington native, who grew up in Granbury, is set to graduate Friday with a Bachelor of Science in Visualization.

Rollo received her undergraduate degree in Visualization in 2021. She is set to graduate Saturday with a Master of Fine Arts in Visualization.

The Round Rock native is set to graduate Friday with a Bachelor of Science in Visualization.

The Arlington native is set to graduate Saturday with a Master of Science in Visualization.

The student-run event featured an art exhibition, an interactive showcase, a research symposium and a screening in the Rudder Complex.

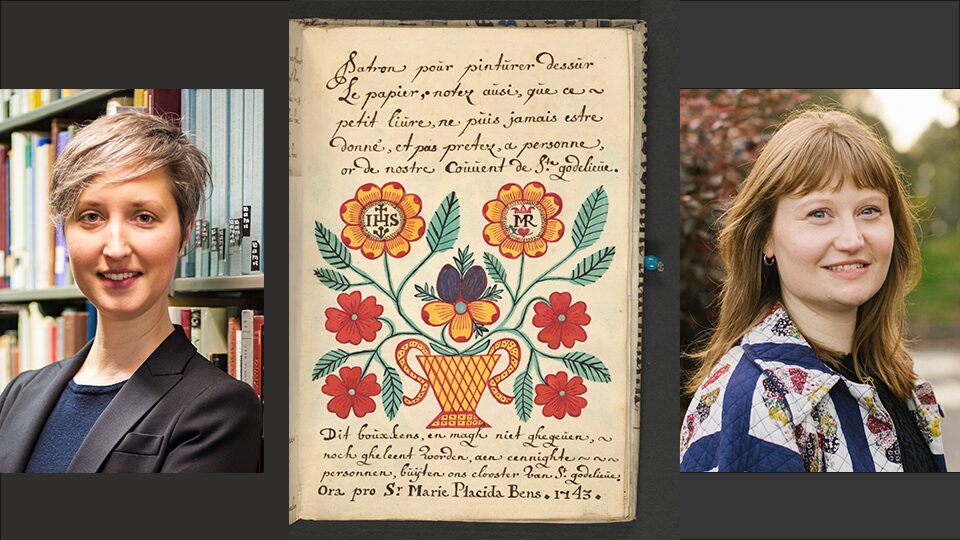

Join Tianna Uchacz, Ph.D., assistant professor in Visualization, and Sophie Pitman, Ph.D., of the University of Wisconsin-Madison, for a discussion of their work with a curious 18th-century French-Flemish manuscript in UW-Madison’s Special Collections. The translated manuscript reveals instructions for dyeing textiles and paper to create artificial flowers.

This public presentation on the reconstruction of historical textiles and fashion by the Glasscock Center’s Visiting Fellow Dr. Sophie Pitman (UW-Madison) includes an opportunity to try Renaissance fabric finishing techniques. The textile researcher will explain what we can learn from incorporating hands-on experimentation into our archival, literary and visual analysis.

This student-run event is the 33rd annual showcase of Visualization students’ work from the past year, including a gallery exhibition of physical works and a screening of time-based works.
